COMPUTE/COMPETE #3

What does Sovereign AI even mean?

This week’s instalment of COMPUTE/COMPETE entails a deep dive into 3 particular case studies of Sovereign AI: Europe’s bet on excellence, India’s bet on impact, and, finally, reality’s bet on power.

First, an interview with Stan van Baarsen, co-author of the Dutch National AI Delta Plan. Then, learn why a meme has made more impact on the internet than the recent Indian AI Summit. Lastly, what does the recent Department of War–Anthropic feud mean for the fate of non-AI powers going forward?

Enjoy!

P.S. COMPUTE/COMPETE is a project in collaboration with European Guanxi. You can also find the series on their Substack, along with contemporary analysis on EU-China relations.

EUROPE’S BET ON EXCELLENCE

What comes to mind when you think of Europe? Delicious food, old buildings, and a deep history—or simply colonialism, perhaps. Yet, what about European tech, startups, and AI? Well, if you have just as much free time as I do, your mind may immediately jump to stories of overbearing regulation and startups fleeing to the capital-rich United States.

These were just the types of narratives I wished to interrogate and, hopefully, challenge (not the colonialism bit) when the opportunity to interview a bright young mind in the European AI space and co-author of the Dutch National AI Delta Plan fell into my lap. And so, with great excitement, I was placed into a group our mutual friend jokingly titled ‘The Sovereign AI Bros’, scheduled a time to chat, and sat down to discuss everything Dutch/European AI with Stan van Baarsen, AI Fellow at the AI Plan Institute.

Literally working on your country’s Sovereign AI strategy is an incredible position to be in for someone so young. How did you get there?

A LinkedIn message. (I guess fairytales do exist).

Our conversation began with Stan’s background. He began studying computer science at university before realizing he had a strong desire to work with people, leading him to pivot to international business administration. Yet, he hesitated to forgo a technical background, and so pivoted once more to studying both at the same time. (‘Luckily education was cheap’, he somewhat smugly noted to my shocked American mind.)

Following graduation, Stan pursued a Master’s in tech policy at Cambridge, where he developed an interest in innovation ecosystems around institutions like universities while working on a gov-tech startup with my co-podcaster.

All seemed great. That is, until he realized the AI Revolution was rapidly dawning and his home country, the Netherlands, was doing too little to prepare.

After two Dutch thinkers published an article extolling the necessity of a national AI plan and it subsequently made the rounds on the internet, Stan, inspired, took the most logical next step: he DM-ed them on LinkedIn and joined their team.

When, shortly after, a new Minister of Economic Affairs took office and wanted to develop a substantial national AI Strategy, he turned to this newly formed group of AI experts and asked them to draft what such a strategy would look like for the Netherlands.

And so, suddenly, Stan was helping write the Dutch National AI Strategy. Easy, right?

It makes sense to create a National AI Strategy for a great power like the U.S., China, or even EU-wide, but what would such a strategy’s goal be for a country like the Netherlands?

Well, the Dutch strategy cannot be separated from the EU strategy, Stan began. Au contraire, EU-wide strategies are meant to be pursued in tandem with Member State policies, each according to its capabilities.

The European Union, for example, has significantly more resources and convening ability than does any individual Member State, and so should pursue and lead the largest-scale initiatives. These range from regulatory coordination to resource-intensive infrastructure development like data centres. Stan even mentioned a competition that will provide funding and compute to European labs in an effort to incubate domestic frontier AI capabilities, reminiscent of South Korea’s ongoing ‘AI Squidgame’.

Member States, on the other hand, are able to operate with much more focus, agility, and speed. While the EU rules by consensus, individual governments can more easily implement policies with specific, targeted outcomes. For Stan, this first means national governments should champion initiatives that foster domestic innovation and nurture local talent by strengthening ecosystems like universities. Second, Member States should leverage their on-the-ground expertise and closer feedback loop to support industries of national strength or specialty, whose niche scope and pace the EU cannot keep up with at the Union level.

Individually, each component offers just one piece of the larger puzzle, but taken together, they leave him hopeful about a European Sovereign AI Strategy. The thing is: it just has to pick its battles. ‘When a company reaches AGI [Artificial General Intelligence, A.K.A. Machine God], if that happens, it won’t be European’.

If the goal isn’t to pursue superintelligence, then what is it? If developing economies translate ‘Sovereign AI’ as a path to developing a domestic digital economy, then what does ‘Sovereign AI’ mean for a developed economy like the Netherlands?

Ever practical, Stan began with what he deemed already lost. According to him, Europe had already lost the race for frontier foundation models (think ChatGPT, Claude, or DeepSeek) as well as for some domain-specific AI products like Cursor and Harvey.

Yet, he pivoted, there are still plenty of sectors that don’t yet have an established domain-specific AI provider, a space the industry calls Vertical AI. Vertical AI models or agents are specially trained according to the data and needs of a specific industry or expertise. They can oftentimes perform better than generic foundation models in particular tasks, though, of course, they require a foundation model as a foundation.

Here, Stan believes, lies the AI opportunity for the Netherlands. In sectors where the country has particular comparative advantages, such as the biotech and life sciences industries, fintech, and agritech, he thinks the government should do its utmost to foster the environment for a Dutch champion to emerge by facilitating and encouraging AI adoption and innovation. Another particular area of strength is semiconductor technology, centered around the lithography giant, Europe’s most valuable company and linchpin of the entire global chip supply chain: ASML.

As such, I inferred a contrast. Developing economies see Sovereign AI as a once-in-a-lifetime opportunity to rapidly develop the infrastructure needed to foment a local digital economy, climbing the value chain at least from natural resource exporter to data aggregator and manipulator. Developed economies like the Netherlands, it seems, are confronting Sovereign AI as the make-or-break moment to lock in or defend their place at the top of the global value chain. As the world, or at least France, is now learning from semi-successful attempts to impose child safety-related digital regulation, much of the decision-making sovereignty, and most of the money, lies with whichever country’s companies sit atop the digital service value chain. Without a domestic Tech Titan, one risks becoming a digital rule-taker.

To make this point, Stan mentioned a case in which the Netherlands turned down the construction of a potential Google data centre, a decision hard to believe amid the cacophony of global compute mania. Why? Because there isn’t too much value in compute generation, he said. ‘We’re much more interested in having a new Google R&D lab’.

Okay, so it doesn’t sound too bad for the Netherlands, then, despite all the doom and gloom.

Well, don’t get ahead of yourself.

‘The thought of not having a frontier model in Europe is still scary’, Stan admitted. Beyond the philosophical and political implications inherent in foreign-built AI models, which encode not just good behavior but what ‘good behavior’ means, he sees significant economic ramifications. Though he hopes that, at some point, open-source models will replace the state-of-the-art as ‘good enough’, he fears a potential future where European Vertical AI is built on top of foreign foundation models, resulting in a system of rent extraction. Every time a model does anything, the tithe would have to be paid to the foreign tech companies in the form of API payments.

At the very least, he doesn’t think the U.S. would ever ‘cut off’ access to its models (trust in the corporate bottom line), BUT overreliance and limited competition could lead to scarcity and price-hikes. Not fun.

And so, after some back-and-forth in which we both criticized the idea of ‘strategic autonomy’ (read: ignoring market realities), Stan concluded by positioning the goal of the Netherlands, and I think it’d be fair to say Europe, as maintaining a sort of ‘strategic relevance’. Perhaps not dominating every layer of the AI stack, but at least excelling where they’re strongest. Who could ask for anything better?

Thank you, Stan. And, good luck, Netherlands! I’m rooting for you.

INDIA’S BET ON IMPACT

A major conference on AI just ended in India, and you’d be forgiven for having never heard of it.

Even worse, you may have unknowingly glimpsed it through a popular photo going around social media of OpenAI’s CEO Sam Altman and Anthropic’s CEO Dario Amodei—two people with potentially extremely outsized influence on the future of humanity—refusing to hold hands. The planned gesture, featuring several other top CEOs such as Google’s Sundar Pichai and even India’s Prime Minister Narendra Modi on the stage, was meant to signal the conference’s and thus India’s position on AI: All Inclusive.

Instead, one moment of pettiness from two academic researchers turned world-famous businessmen spotlighted what Kevin Xu calls AI’s ‘zero-sum problem’ (only one company can win the ‘race’) and overshadowed India’s moment in the limelight. What was supposed to be a crowning moment positioning India as a ‘major AI hub’, according to India’s technology minister, Ashwini Vaishnaw, instead highlighted the outsized role of the AI Titans in charting the course for the future of the technology and how it will be used, with India’s Modi cut out of frame in half the trending photos of the event.

Yet, as seen in this newsletter’s last episode on Malaysia, narratives very often hide real impact and initiatives. So, intrigued by the surely lucrative and influential deals struck behind the colorful drama, I set out to answer two questions:

What does AI mean for India?

How does the country hope to achieve it?

Ok, I’ll listen. But, what do you mean by ‘What does AI mean for India?’ How can AI mean different things for different countries?

Well, what do you think of when you think of AI and its potential effects on a country? It’s nebulous, right? Inherent in the technology, especially in this current moment, is uncertainty. Leading researchers may only be able to tell you with a little more clarity than the average person what the future potential of the technology will be. Is there an AI bubble? The truth is: no one knows. If AI proves to be just a useful tool, then perhaps. Data centers will certainly be useful, new digital sectors will certainly be created, but these giant tech startups may not be able to justify their sky-high valuations. Taking the opposite tack, if AI drastically changes how we work and what’s considered possible, then it’s not hard to see the return as infinite. For some, that bet is too good to pass up.

Now, in addition to the certain uncertainty, consider the different realities of countries’ economies. The U.S. has deep financial markets, well-oiled startup pathways, and a substantial digital service economy. China has the next best capital base (though nothing compared to the U.S.), sophisticated industrial policy strategies, and an unmatched manufacturing ecosystem. The extent of the capability to develop the technology and its potential impact on the economy thus varies according to each country’s conditions. (For perhaps the best explanation of China’s unique ‘AI conditions’, I recommend you read Grace Shao’s article here.)

So, where does India fit in, then?

Well, for one, the country has a significant digital economy, though it sits at the lower-to-middle end of the value chain. This presents both a sizable opportunity and an acute risk. India has a young, digital-native population, not to mention the largest population in the world. According to the telecom firm Ericsson, the Indian subcontinent already uses more mobile data than any other region globally. And, it’s not just scale, but depth: a workplace survey from education giant Emeritus found that 96% of Indian professionals used GenAI tools at work, compared to 81% in the U.S. Beyond the government’s hope to maintain its economic position as the global digital offshore location of choice, if given the proper resources and incubated within the right ecosystem, tech-forward individuals could take advantage of the advent of the new technology to create frontier, high-value companies that are competitive not just in emerging markets, but everywhere.

The upside, according to the government’s own plan: 6 million people are already employed in the tech and AI ecosystem, with the sector projected to cross $280 billion in revenue this year. And, if AI takes off, it could add $1.7 trillion to India’s economy by 2035. Compare that with India’s current $4.5 trillion GDP.

Now, the downside, according to practical, cold-hearted investors: AI’s first automation-driven blow to the job market may land on exactly the types of jobs India’s digital sector dominates. From the millions employed in low-end operations like call centers and back-office services to higher-value, traditional software giants, AI is poised to remake the entire digital service industry. After Anthropic in early February rolled out new tools for its Claude Agent, such as legal and financial analysis, investors reacted with a large-scale sell-off of companies traditionally assumed to have moats. In one month, an ETF that broadly tracks software companies lost 27% of its value. As the shock reverberated, Indian tech-service giants like Infosys and Tech Mahindra similarly lost tens of billions of dollars, up to 7% of their market value.

As such, the Sovereign AI push in India is at least as much economic as it is political.

That does seem drastic. What is the country doing, then, to take advantage of this moment of equal potential and peril?

And, that gets to the second question: What resources does the state have to facilitate early AI adoption and innovation across the country? The answer is not much. In 2024, India launched a $1.1 billion AI infrastructure fund to be spent over the next five years. Compare that with the estimated $226 billion in funding that went to AI firms from venture capital alone in 2025. No, alone, it does not have the resources. But, luckily, it isn’t alone.

India is quickly becoming a vital pillar in American tech giants’ strategies. Partly thanks to its large, digital-native population, many of whom speak English, the country became the world’s largest market for GenAI app downloads in 2025. Though the return from the market is obviously less than from high-wage American consumers, it provides a different, potentially more lucrative resource: data. After the entire internet was used to train previous generations of AI, high-quality data has emerged as one of the most important bottlenecks in frontier model development. A numerically large consumer base, then, is a gold mine.

Leveraging this bait, the Indian government instituted two policies in a bid to capture value from the AI wave for the Indian economy. First, it passed the Digital Personal Data Protection Act, which regulated the handling of all data generated within the country as well as required financial, telecom, and health data to be stored exclusively within the country. Second, it announced plans to give tech firms a 20-year tax break on overseas revenue gleaned from data services based in India. Both policies were designed to attract external data centre funding.

And, they have worked unbelievably well. In the past year, Google, Microsoft, and Amazon have collectively announced $67.5 billion in data centre and operations expansion investments in the country. In addition, American AI leaders have partnered with Indian tech giants to pair cutting-edge tech with local reach. Just in the past few months, OpenAI announced its collaboration with Tata Group, Anthropic partnered with Infosys, and the Adani Group deepened its collaboration with Google and Microsoft.

While the government has harnessed large domestic conglomerates to power the AI buildout, it sees its own role as ensuring the creation of open, public infrastructure, such as subsidized computing power for startups and research groups at rates under $1 an hour. This focus on digital public infrastructure (DPI), which builds on precedents such as the Aadhaar digital ID system and the Unified Payments Interface (UPI) for fee-free transactions, aims to lower the barriers to entry for small, local applications.

And, that seems to be India’s chosen niche—or ‘what AI means’ for the country. Cheap, adaptable AI models and applications that solve real-world problems for institutions under strain. This is the angle India believes will define ‘Global South AI’. AI for the countries that need its potential most, but can’t afford a $200 Claude subscription. Here, apps like Adalat AI, a transcription tool for legal proceedings that’s fluent in India’s many languages, come into play. According to the company’s CTO, Arghya Bhattacharya, ‘One of the things we strongly believe is you don’t need large models. You need models that are smaller and customized for every setting’.

For now, the country must strike a careful balance, encouraging the disruption necessary for a future, innovative tech industry while managing the risk that same disruption brings to its current, vital digital service economy. Judging by the inability of the two leading AI companies’ heads to merely hold hands, it has its work cut out for it.

REALITY’S BET ON POWER

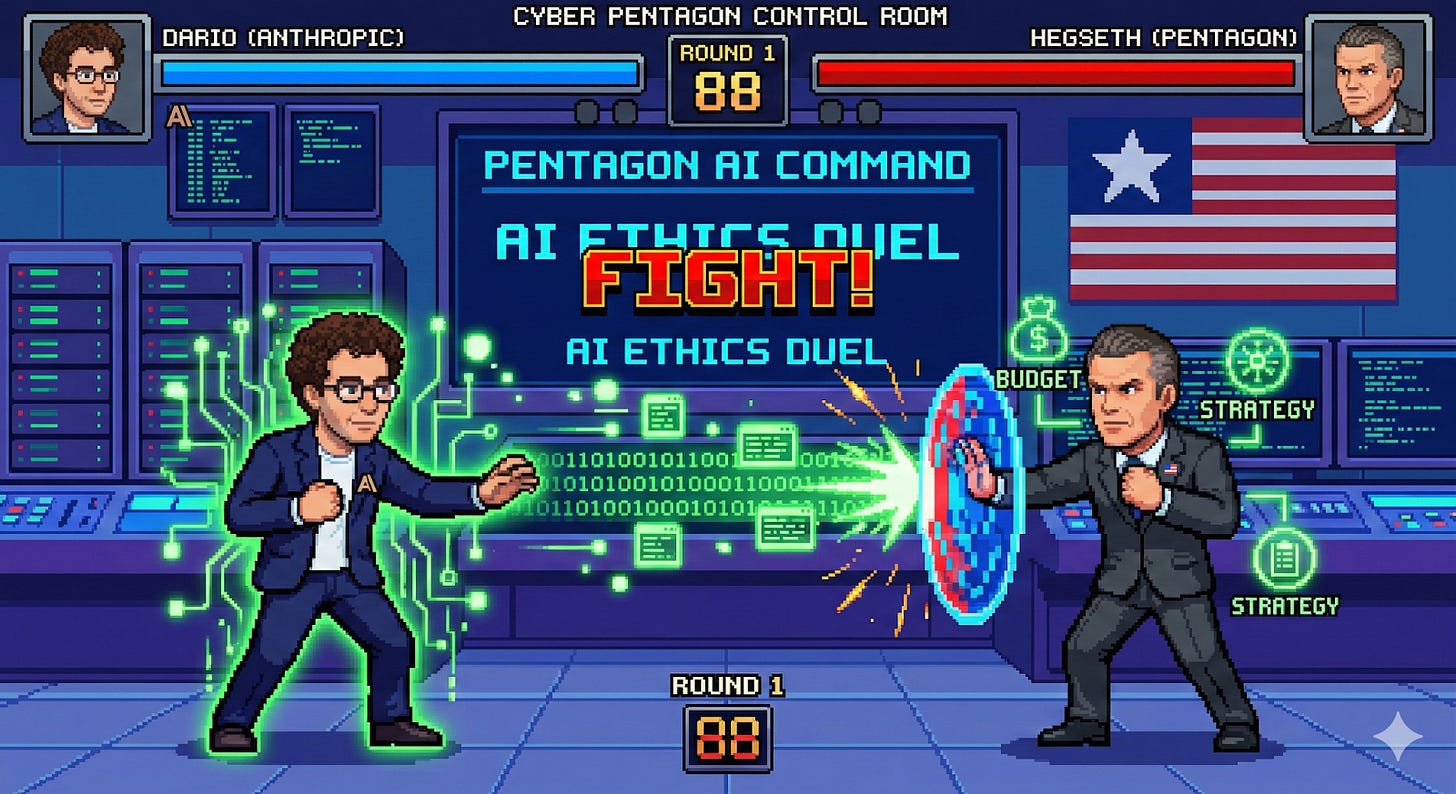

This column would be remiss not to cover perhaps the biggest news of the past week, or at least the biggest news until the U.S. and Israel carried out strikes (but not in a declare-war type of way) in Iran. What I’m referring to, if you haven’t forgotten it already, is the other war, the one between the United States Department of War and Anthropic.

Conflict was inevitable, but slow-building. Anthropic prides itself on being the first model provider to be integrated into the government’s classified information structure, including the military. It shows maturity in capability and deployment. Yet, tensions sparked when news broke that the U.S. military used Claude in the operation to kidnap Nicolás Maduro, the then-President of Venezuela. Successful deployment is great for marketing, but the episode forced many leading AI figures, who consider themselves both researchers and philosophers, to reckon with the consequences their technology brought into the world.

Even more, Artificial Intelligence as a technology is unique in the ability of model providers to decide how it can be used after creation. Through ‘alignment strategies’ (Anthropic calls its own a constitution), AI researchers seek to train morality into their models, creating rules for what they should and shouldn’t do. The model shouldn’t, theoretically, ever lose sight of its normative guardrails, even, or perhaps especially, when used by the government. Thus, an impasse ensued when Anthropic’s CEO, Dario Amodei, refused to allow the government to deploy its model, even hypothetically, in mass domestic surveillance and fully autonomous weapons. His reasoning: the law hasn’t yet caught up to the technology’s capabilities for surveillance, and the tech is not yet capable enough to be trusted to control a weapon.

Now, it’d be a mistake to think the disagreement is because the government wants to conduct mass surveillance (of course, it would never) or deploy fully autonomous weapons. The principal question here is: Who gets to decide what AI can be used for?

The President of the Council on Foreign Relations, Michael Froman, outlines the uniqueness of the predicament well: ‘This is an extraordinary moment. We live in a free market economy, and firms generally enjoy broad latitude to determine the terms on which they provide their products to the government or anyone else. But we also live in a constitutional republic, one in which the people have vested in the state great power—an effective monopoly on violence—and the democratic process is the sole arbiter of its exercise’. Regardless of the hypothetical use case, the government doesn’t want a corporation to tell it what it can or cannot do.

Since then, the government has supposedly banned the use of Claude, right before striking an agreement with Anthropic’s rival, OpenAI. Meanwhile, the U.S. military reportedly continues to use Claude in the war with Iran.

Beyond the narrative, this episode illustrates the good fortune of the U.S. and the growing risk to non-AI powers that comes with Geopolitical AI. While the U.S. can spurn a frontier model provider over a disagreement about how its model can be used, only to then coerce its use or turn to a different, equally powerful model, other countries may be forced to take what deals they can, and cede what sovereignty they must.

Yet, while the unprecedented ability for model providers to control their technology may lead to unforeseen levels of leverage and dependency, in the end, much is still the same. Since the dawn of humankind and the institution of the state, ‘the strong do what they can, and the weak suffer what they must’.

Sovereign AI, then, is the pursuit of maximizing the ‘can’ while minimizing the ‘must’. Reality is what defines the limits of this.